
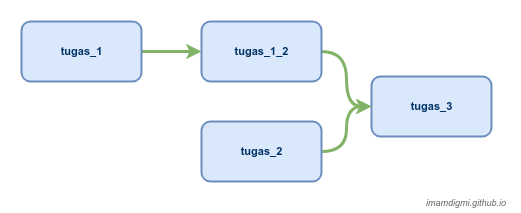

Our Python script's contents are reproduced below (to check for syntax issues, just run the .py file on the command line). The script opens with the comment `# The DAG object; we'll need this to instantiate a DAG`, followed by an import that begins `from airflow.operators.`

Adding two tasks with the same task_id produces a warning like this one:

`Users/theja/miniconda3/envs/datasci-dev/lib/python3.7/site-packages/airflow/models/dag.py:1342: PendingDeprecationWarning: The requested task could not be added to the DAG because a task with task_id create_tag_template_field_result is already in the DAG. Starting in Airflow 2.0, trying to overwrite a task will raise an exception.`

On the data folders: 0.raw is the place to store initial data sources. In this guide, I will not use this folder. … raw, I'll directly pass it to the load function and save it to 3. … transform is the place to store extracted or transformed data if you're going to perform sink.

For instance, when you start the webserver, you should see output similar to the following:

`(datasci-dev) ttmac:lec05 theja$ airflow webserver -p 8080` (this starts the web server; the default port is 8080)

`INFO - Filling up the DagBag from /Users/theja/airflow/dags`

Then visit localhost:8080 in the browser and enable the example DAG on the home page.

Debunking the DAG:

DAG: directed acyclic graph, a set of tasks with an explicit execution order, a beginning, and an end.

DAG run: an individual execution (run) of a DAG.
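To make the DAG and DAG run definitions a bit more concrete, here is a minimal sketch in plain Python. It is not Airflow's API: the task names `extract`, `transform`, and `load` and the helper functions are hypothetical, and the standard-library `graphlib` module (Python 3.9+) stands in for a real scheduler.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical pipeline: each key is a task, each value is the set of
# tasks that must finish before that task may start.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}


def execution_order(deps):
    """Return one valid run order for the tasks; raise CycleError on a cycle."""
    return list(TopologicalSorter(deps).static_order())


def run_dag(deps, run_id):
    """Simulate one DAG run: visit every task once, in dependency order."""
    return [(run_id, task) for task in execution_order(deps)]


order = execution_order(dependencies)
first_run = run_dag(dependencies, run_id="run_1")

# A graph with a cycle is not a DAG, so no valid execution order exists.
try:
    execution_order({"a": {"b"}, "b": {"a"}})
    has_cycle = False
except CycleError:
    has_cycle = True
```

Each call to `run_dag` with a new `run_id` plays the role of a separate DAG run over the same task graph. The "acyclic" part is what guarantees that an execution order exists at all.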

Airflow and dbt share the same high-level purpose: to help teams deliver reliable data to the people they work with, using a common interface to collaborate on that work. But the two tools handle different parts of that workflow: Airflow helps orchestrate jobs that extract data, load it into a warehouse, and handle machine-learning processes.

Let's install the airflow package and get a server running. From the quickstart page: Airflow needs a home; `~/airflow` is the default, but you can lay foundation somewhere else if you prefer.

In the terminal, I run the command `docker-compose up -d`. This starts the webserver, scheduler, triggerer, and Postgres in containers.

Every task in an Airflow DAG is defined by an operator (we will dive into more details soon) and has its own task_id, which has to be unique within a DAG.
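The uniqueness rule for task ids can be illustrated with a small stand-in written in plain Python. This is only a sketch under stated assumptions: `ToyDag`, `add_task`, and `DuplicateTaskIdError` are hypothetical names, not Airflow classes, and the operator is represented by a plain string.

```python
class DuplicateTaskIdError(Exception):
    """Raised when a task id is registered twice in the same toy DAG."""


class ToyDag:
    """Hypothetical stand-in for a DAG that enforces unique task ids."""

    def __init__(self, dag_id):
        self.dag_id = dag_id
        self.tasks = {}  # maps task_id -> operator description

    def add_task(self, task_id, operator):
        # Refuse to overwrite an existing task instead of replacing it silently.
        if task_id in self.tasks:
            raise DuplicateTaskIdError(
                f"task_id {task_id!r} is already in DAG {self.dag_id!r}"
            )
        self.tasks[task_id] = operator


dag = ToyDag("example_dag")
dag.add_task("print_date", "bash operator placeholder")

try:
    dag.add_task("print_date", "another operator placeholder")
    duplicate_rejected = False
except DuplicateTaskIdError:
    duplicate_rejected = True

# The same task id is fine in a different DAG: uniqueness is per DAG.
other_dag = ToyDag("other_dag")
other_dag.add_task("print_date", "bash operator placeholder")
```

Raising on the second registration, rather than overwriting, keeps every task id pointing at exactly one task within its DAG.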
